softmax (2): implementation from scratch

Note

This post mainly records the code, refines a few details, and adds comments.

Data loading

import torch
from IPython import display
from d2l import torch as d2l

batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

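Here, load_data_fashion_mnist is defined as follows. It returns DataLoader objects for the training set and the test set. Each one is an iterable that yields batch_size examples at a time; the training loader shuffles the data, while the test loader reads it in order.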

import torchvision
from torch.utils import data
from torchvision import transforms

def load_data_fashion_mnist(batch_size, resize=None):  #@save
    """Download the Fashion-MNIST dataset and load it into memory."""
    trans = [transforms.ToTensor()]
    if resize:
        trans.insert(0, transforms.Resize(resize))
    trans = transforms.Compose(trans)
    mnist_train = torchvision.datasets.FashionMNIST(
        root="../data", train=True, transform=trans, download=True)
    mnist_test = torchvision.datasets.FashionMNIST(
        root="../data", train=False, transform=trans, download=True)
    # get_dataloader_workers is the d2l helper that sets the number of worker processes
    return (data.DataLoader(mnist_train, batch_size, shuffle=True,
                            num_workers=d2l.get_dataloader_workers()),
            data.DataLoader(mnist_test, batch_size, shuffle=False,
                            num_workers=d2l.get_dataloader_workers()))
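To see what such a loader yields without downloading anything, the sketch below wraps a synthetic dataset shaped like Fashion-MNIST in a DataLoader. The random tensors and the names fake_images, fake_labels, and fake_iter are stand-ins for illustration, not part of the original code.

```python
import torch
from torch.utils import data

# Synthetic stand-in for Fashion-MNIST: 1000 grayscale 28x28 images, 10 classes.
fake_images = torch.rand(1000, 1, 28, 28)
fake_labels = torch.randint(0, 10, (1000,))
fake_iter = data.DataLoader(data.TensorDataset(fake_images, fake_labels),
                            batch_size=256, shuffle=True)

# Each iteration yields one minibatch of features and labels.
X, y = next(iter(fake_iter))
print(X.shape, y.shape)  # torch.Size([256, 1, 28, 28]) torch.Size([256])

# 1000 is not a multiple of 256, so the fourth and last batch is smaller.
print(len(fake_iter))  # 4
```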

Model parameters

num_inputs = 784
num_outputs = 10

W = torch.normal(0, 0.01, size=(num_inputs, num_outputs), requires_grad=True)
b = torch.zeros(num_outputs, requires_grad=True)

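The input is a 28x28 image, flattened into a vector of length 784, and the output covers ten classes. The weight matrix W is initialized from a normal distribution with mean 0 and standard deviation 0.01, and the bias b is initialized to zeros.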

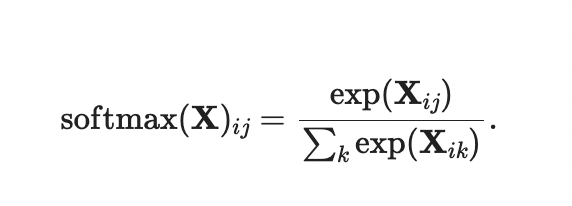

Implementing softmax

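Implementing softmax takes three steps:

- exponentiate each element (using exp);
- sum over each row (each example in the minibatch is one row) to get the normalization constant for each example;
- divide each row by its normalization constant so that the result sums to 1.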

def softmax(X):
    X_exp = torch.exp(X)
    partition = X_exp.sum(1, keepdim=True)
    return X_exp / partition  # broadcasting is applied here

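As the code above shows, for any random input every element becomes a non-negative number, and each row sums to 1, as a probability distribution requires.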
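A quick check of both properties, as a minimal sketch on a random 2x5 input. The softmax definition is repeated so the snippet runs on its own; X_prob is just an illustrative name.

```python
import torch

def softmax(X):
    X_exp = torch.exp(X)                    # step 1: exponentiate every element
    partition = X_exp.sum(1, keepdim=True)  # step 2: one normalization constant per row
    return X_exp / partition                # step 3: divide each row (via broadcasting)

X = torch.normal(0, 1, (2, 5))
X_prob = softmax(X)
print(X_prob)         # every entry is non-negative
print(X_prob.sum(1))  # each row sums to 1, up to floating-point error
```

This simple version does not guard against overflow: torch.exp returns inf for very large inputs.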

Model definition

def net(X):
    return softmax(torch.matmul(X.reshape((-1, W.shape[0])), W) + b)

This model is simply a fully connected layer followed by softmax.
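The shapes involved can be traced with a small sketch. It repeats the definitions above and feeds in a random batch of four fake images; the input X here is made up for illustration.

```python
import torch

num_inputs, num_outputs = 784, 10
W = torch.normal(0, 0.01, size=(num_inputs, num_outputs), requires_grad=True)
b = torch.zeros(num_outputs, requires_grad=True)

def softmax(X):
    X_exp = torch.exp(X)
    return X_exp / X_exp.sum(1, keepdim=True)

def net(X):
    # Flatten each image to a row of length 784, apply the linear layer, then softmax.
    return softmax(torch.matmul(X.reshape((-1, W.shape[0])), W) + b)

X = torch.rand(4, 1, 28, 28)              # a fake batch of 4 grayscale images
print(X.reshape((-1, W.shape[0])).shape)  # torch.Size([4, 784])
y_hat = net(X)
print(y_hat.shape)                        # torch.Size([4, 10])
```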

Loss function

def cross_entropy(y_hat, y):
    return -torch.log(y_hat[range(len(y_hat)), y])

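In essence, this takes the negative log of the predicted probability for the correct class. Note how y_hat is indexed: y_hat[range(len(y_hat)), y] picks, for each row i, the entry in column y[i].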
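The indexing is easiest to see on a tiny example. The values below are made up for illustration: two examples, three classes, with true labels 0 and 2.

```python
import torch

y = torch.tensor([0, 2])                                  # true classes
y_hat = torch.tensor([[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]])  # predicted probabilities

# Row 0 takes column 0, row 1 takes column 2.
picked = y_hat[range(len(y_hat)), y]
print(picked)  # tensor([0.1000, 0.5000])

def cross_entropy(y_hat, y):
    return -torch.log(y_hat[range(len(y_hat)), y])

losses = cross_entropy(y_hat, y)
print(losses)  # tensor([2.3026, 0.6931])
```

The first example gets the larger loss because the model assigned only 0.1 to its correct class.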

Classification accuracy

def accuracy(y_hat, y):  #@save
    """Compute the number of correct predictions."""
    if len(y_hat.shape) > 1 and y_hat.shape[1] > 1:
        y_hat = y_hat.argmax(axis=1)  # index of the largest value in each row
    cmp = y_hat.type(y.dtype) == y  # True where the prediction is correct
    return float(cmp.type(y.dtype).sum())
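As a small worked example, with made-up values: the predictions below have argmax 2 for both rows, while the true labels are 0 and 2, so one of two predictions is correct.

```python
import torch

def accuracy(y_hat, y):
    """Compute the number of correct predictions."""
    if len(y_hat.shape) > 1 and y_hat.shape[1] > 1:
        y_hat = y_hat.argmax(axis=1)
    cmp = y_hat.type(y.dtype) == y
    return float(cmp.type(y.dtype).sum())

y = torch.tensor([0, 2])
y_hat = torch.tensor([[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]])

num_correct = accuracy(y_hat, y)
print(num_correct)           # 1.0
print(num_correct / len(y))  # 0.5
```

Note that accuracy returns a count, not a ratio; dividing by the number of examples is left to the caller.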

Evaluating accuracy

def evaluate_accuracy(net, data_iter):  #@save
    """Compute the accuracy of the model on the given dataset."""
    if isinstance(net, torch.nn.Module):
        net.eval()  # put the model in evaluation mode
    metric = Accumulator(2)  # number of correct predictions, number of predictions
    with torch.no_grad():
        for X, y in data_iter:
            metric.add(accuracy(net(X), y), y.numel())  # (correct count, total count)
    return metric[0] / metric[1]

class Accumulator:  #@save
    """Accumulate sums over n variables."""
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        # Accumulate: add each new value b to the running total a
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def reset(self):
        self.data = [0.0] * len(self.data)

    def __getitem__(self, idx):
        return self.data[idx]
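A short usage sketch shows how the accumulator keeps the two running totals that evaluate_accuracy divides at the end. The numbers are made up for illustration.

```python
class Accumulator:
    """Accumulate sums over n variables."""
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def reset(self):
        self.data = [0.0] * len(self.data)

    def __getitem__(self, idx):
        return self.data[idx]

metric = Accumulator(2)       # slot 0: correct predictions, slot 1: total predictions
metric.add(3, 4)              # first batch: 3 of 4 correct
metric.add(5, 6)              # second batch: 5 of 6 correct
print(metric[0], metric[1])   # 8.0 10.0
ratio = metric[0] / metric[1]
print(ratio)                  # 0.8

metric.reset()
print(metric[0], metric[1])   # 0.0 0.0
```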

Training

num_epochs, lr = 5, 0.1

# This function is saved in the d2lzh_pytorch package for later use

def train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size,
              params=None, lr=None, optimizer=None):
    for epoch in range(num_epochs):
        train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
        for X, y in train_iter:
            y_hat = net(X)
            l = loss(y_hat, y).sum()
            # Zero the gradients
            if optimizer is not None:
                optimizer.zero_grad()
            elif params is not None and params[0].grad is not None:
                for param in params:
                    param.grad.data.zero_()
            l.backward()
            if optimizer is None:
                d2l.sgd(params, lr, batch_size)
            else:
                optimizer.step()  # used in the section on the concise implementation of softmax regression
            train_l_sum += l.item()
            train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
            n += y.shape[0]
        test_acc = evaluate_accuracy(net, test_iter)  # note: argument order is the reverse of the book's
        print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'
              % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

train_ch3(net, train_iter, test_iter, cross_entropy, num_epochs, batch_size, [W, b], lr)
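The training loop sums the per-example losses and then calls d2l.sgd with batch_size. The sketch below shows the kind of update this implies, assuming d2l.sgd performs plain minibatch SGD that divides the summed gradient by the batch size. The function sgd_sketch is a hypothetical stand-in written for this note, not the library's code.

```python
import torch

def sgd_sketch(params, lr, batch_size):
    """Hypothetical stand-in for d2l.sgd: minibatch stochastic gradient descent."""
    with torch.no_grad():
        for param in params:
            # The loss was summed over the batch, so divide to get the mean gradient.
            param -= lr * param.grad / batch_size
            param.grad.zero_()

w = torch.tensor([1.0, 2.0], requires_grad=True)
loss = (w ** 2).sum()  # gradient is 2 * w = [2, 4]
loss.backward()
sgd_sketch([w], lr=0.1, batch_size=2)
print(w)  # tensor([0.9000, 1.8000], requires_grad=True)
```

Under this assumption, summing the loss in train_ch3 and dividing by batch_size in the update is equivalent to taking a step on the mean loss.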

Prediction

X, y = next(iter(test_iter))  # iterators have no .next() method in Python 3

true_labels = d2l.get_fashion_mnist_labels(y.numpy())

pred_labels = d2l.get_fashion_mnist_labels(net(X).argmax(dim=1).numpy())

titles = [true + '\n' + pred for true, pred in zip(true_labels, pred_labels)]

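# show_fashion_mnist comes from d2lzh_pytorch; with d2l.torch, show_images does the plotting
d2l.show_images(X[0:9].reshape((9, 28, 28)), 1, 9, titles=titles[0:9])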

Prediction is just one forward pass through the model.